Meta Andromeda: real revolution in ad delivery or black box with better marketing?

Andromeda radically expanded how many ads compete for each impression on Meta, feeding Advantage+ with more data and more options. This can improve results for some advertisers, but it also concentrates more power in a system that the advertiser cannot audit or verify independently. The operational response depends on team size, budget, and tolerance for concentration risk on a single platform.

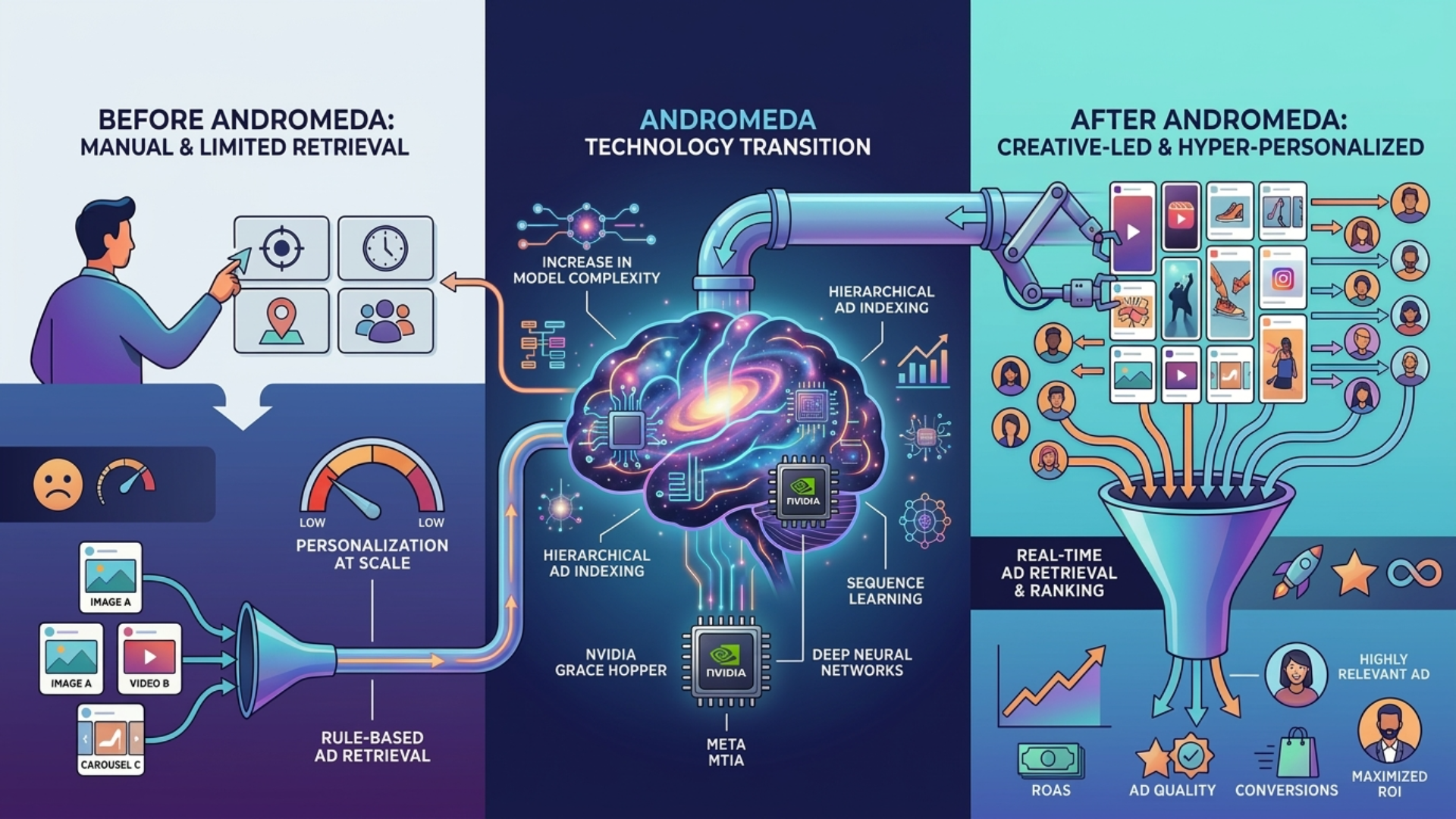

Meta introduced Andromeda in 2023 as a replacement for its previous ad retrieval system and has continued iterating on it through 2026. According to the paper published by Meta AI Research, Andromeda uses a dense retrieval model based on embeddings that can evaluate tens of thousands of candidate ads per impression. The previous system operated with a more restrictive pre-filtering funnel that discarded most ads before they reached the ranking model. Andromeda changed that logic: instead of filtering first and evaluating later, it represents both the user and the ads in a shared vector space and calculates latent relevance, which allows it to consider candidates that explicit targeting would have excluded.

This change did not occur in isolation. It coincided with Meta's aggressive push toward Advantage+, its suite of automated campaigns that consolidates targeting, placements, and bidding under an algorithmic umbrella. In the Q4 2025 earnings call, Mark Zuckerberg attributed part of the 22% year-over-year growth in ad revenue ($46.8 billion in the quarter) to AI-driven improvements in the ads system. The official narrative is clear: the system is smarter, the ads are more relevant, everyone wins.

The reality is more nuanced. That the system is technically superior at the infrastructure level does not mean results improve for every individual advertiser. Andromeda optimizes the ads marketplace as a whole, and in a system with more than 10 million active advertisers, the efficiency of the system and the efficiency of each participant are not the same thing.

What changed in the delivery engine

To understand the operational impact, you need to separate three layers of Meta's delivery system: retrieval (which ads are considered), ranking (how they are ordered), and the final auction (who wins the impression and at what price). Andromeda operates at the first layer.

Before Andromeda, the system used aggressive filtering at the retrieval stage that left out most ads before the ranking model could evaluate them. Andromeda expanded that pool using dense embeddings that capture user behavior signals and ad characteristics in a shared space. What this means in practice is that segmentation configured manually by the media buyer loses relative weight in the delivery decision. An ad can reach the auction not because it matches the advertiser's audience criteria, but because the model detected latent relevance based on patterns the advertiser did not configure and cannot see. This is the technical mechanism behind the experience many teams report: that Advantage+ broad audiences sometimes outperform manually segmented ones.
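The difference between filtering first and retrieving by similarity can be sketched with a toy example. This is an illustration of the general dense-retrieval idea only, not Meta's implementation: the three-dimensional embeddings, the interest labels, and the function names `filter_first` and `dense_retrieve` are all hypothetical.

```python
from math import sqrt

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

# Hypothetical data: real systems use far larger, learned embeddings.
user = {"interests": {"furniture", "watches"}, "embedding": [0.9, 0.1, 0.3]}
ads = [
    {"id": "running_shoes", "target_interest": "running", "embedding": [0.8, 0.2, 0.4]},
    {"id": "office_chairs", "target_interest": "furniture", "embedding": [0.1, 0.9, 0.2]},
    {"id": "trail_watches", "target_interest": "watches", "embedding": [0.7, 0.1, 0.6]},
]

def filter_first(user, ads):
    """Restrictive funnel: drop ads whose explicit targeting excludes the user."""
    return [ad["id"] for ad in ads if ad["target_interest"] in user["interests"]]

def dense_retrieve(user, ads, k=2):
    """Embedding-style retrieval: keep the k ads closest to the user vector."""
    ranked = sorted(
        ads,
        key=lambda ad: cosine(user["embedding"], ad["embedding"]),
        reverse=True,
    )
    return [ad["id"] for ad in ranked[:k]]

print(filter_first(user, ads))    # ['office_chairs', 'trail_watches']
print(dense_retrieve(user, ads))  # ['running_shoes', 'trail_watches']
```

In this toy setup the running-shoes ad never matches the configured interests, yet it is the closest candidate in the shared space, which is the kind of outcome the advertiser did not configure and cannot inspect.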

Prices and causality: what the data says and what it does not

Meta reported that the average price per ad grew 14% in Q4 2025 and attributed it to "improved ad quality and relevance." It is tempting to connect this increase directly with Andromeda: more candidates competing in each auction should, in theory, raise prices. But the causal attribution is more complex than it appears. Q4 always concentrates higher ad spend due to seasonality (Black Friday, holiday season), and CPMs historically rise between 20% and 40% in competitive categories during this period. The number of active advertisers on Meta continues growing (over 10 million), generating more competition for the same inventory. And improvements in the prediction model, which better estimates the expected value of each impression, also justify higher prices independently of Andromeda.

What the data does allow us to say is that the general trend is consistent with a system that increases competition per impression. WordStream documented in its 2025 benchmark that the average CPC on Meta Ads rose 17% compared to 2024 in competitive categories, while CTR improved marginally (3%). More engagement at higher cost is a pattern that fits with broader retrieval, but it is not definitive proof. Honesty requires acknowledging that public data does not allow isolating Andromeda's effect from other market factors.
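One way to read the two benchmark figures together is to convert them into an implied CPM change, since CPM = 1000 × CPC × CTR. The sketch below assumes both percentages are relative changes measured on the same set of campaigns, which the benchmark as quoted here does not confirm; the function name is illustrative.

```python
def implied_cpm_change(cpc_change, ctr_change):
    """Relative CPM change implied by relative CPC and CTR changes.

    CPM = 1000 * CPC * CTR, so the relative changes multiply.
    """
    return (1 + cpc_change) * (1 + ctr_change) - 1

change = implied_cpm_change(0.17, 0.03)
print(f"{change:.1%}")  # 20.5%
```

Under those assumptions, a 17% rise in CPC with a 3% rise in CTR implies that each thousand impressions costs roughly a fifth more, which is consistent with more competition per impression but, as noted, does not isolate its cause.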

Advantage+: polarized results, reduced visibility

Data from the advertiser community reflects a marked polarization. Some report CPAs up to 30% lower with Advantage+ Shopping compared to manual campaigns. Others see inflated CPAs with no possibility of diagnosis, because Advantage+ limits breakdowns by audience, placement, and in many cases by creative performance at a granular level. The pattern that emerges is not binary (it works or it does not): it works in ways that the advertiser cannot independently verify.

A phenomenon that deserves attention is what happens with advertisers who choose not to migrate. Although Meta has not declared that it penalizes manual campaigns, several teams have documented gradual reach declines in manual campaigns running in parallel with Advantage+, especially from mid-2025 onward. The causes could include changes in the inventory mix or redistribution of impressions toward formats Meta prioritizes (such as Reels). The perception in the community is that Meta is creating indirect incentives for migration, making the status quo progressively less viable. Public data does not resolve this question with certainty, but it is relevant enough to monitor actively.

What to do in practice: creative, signals, measurement

The battlefield shifted from "who do I show the ad to" to "how good is my creative and my landing page." The concrete answer varies depending on available resources.

For teams with budgets under $15K/month and two to three people, the minimum viable creative testing involves producing between 5 and 8 creative variants per campaign every two weeks, prioritizing copy and format variations (static vs. short video) over complex production. The early metric to watch is the "thumbstop rate" (percentage of users who stop scrolling): when it drops below 25% on video or CTR falls more than two percentage points from baseline, it is time to rotate. For teams with greater capacity (budgets of $50K+ and 4 or more people), the volume can scale to 15 to 20 variants per cycle, incorporating landing page testing as a second lever. A/B tests on the landing page with a tool like VWO (Google Optimize was discontinued in 2023) can improve conversion rates between 10% and 30% without touching the media budget.
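The rotation rule above is simple enough to automate in a reporting script. A minimal sketch, assuming all metrics are expressed in percent and that thumbstop rate is only available for video creatives; the function name and parameters are hypothetical, and the thresholds are the ones stated in the text.

```python
def should_rotate(ctr_pct, baseline_ctr_pct, thumbstop_pct=None):
    """Return True when a creative hits either rotation trigger.

    ctr_pct, baseline_ctr_pct: click-through rates in percent (e.g. 3.5).
    thumbstop_pct: thumbstop rate in percent, or None for non-video creatives.
    """
    thumbstop_low = thumbstop_pct is not None and thumbstop_pct < 25.0
    ctr_dropped = (baseline_ctr_pct - ctr_pct) > 2.0  # drop in percentage points
    return thumbstop_low or ctr_dropped

print(should_rotate(3.0, 3.5, thumbstop_pct=22.0))  # True: thumbstop below 25%
print(should_rotate(3.0, 3.5, thumbstop_pct=30.0))  # False: both metrics healthy
print(should_rotate(1.0, 3.5))                      # True: CTR fell 2.5 points
```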

The second pillar is conversion signal quality. Configuring Meta's Conversions API (CAPI) requires server-side integration that can take between one and four weeks depending on the tech stack. For teams without a full-time developer, native integrations (Shopify, WooCommerce, Stape.io) simplify implementation from weeks to days. These are not nice-to-haves: they are infrastructure that determines the quality of the data Advantage+ uses to optimize, and therefore the quality of the results.
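For teams building the server-side integration themselves, the core of the work is assembling a well-formed event. The sketch below builds a purchase event without sending it. The field names follow Meta's Conversions API documentation as I understand it and should be checked against the current reference before use; the helper names, order ID, and URLs are hypothetical.

```python
import hashlib
import time

def hash_email(email):
    """Normalize (trim, lowercase) and SHA-256 hash an email address."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()

def build_purchase_event(email, value, currency, event_id, source_url, event_time=None):
    """Assemble one server-side purchase event as a plain dict."""
    return {
        "event_name": "Purchase",
        "event_time": int(event_time if event_time is not None else time.time()),
        # Reusing the browser pixel's event ID lets the platform deduplicate.
        "event_id": event_id,
        "action_source": "website",
        "event_source_url": source_url,
        "user_data": {"em": [hash_email(email)]},
        "custom_data": {"value": value, "currency": currency},
    }

# Hypothetical order; the request body wraps events in a "data" list.
example_payload = {
    "data": [
        build_purchase_event(
            " Buyer@Example.com ",
            49.90,
            "USD",
            "order-1001",
            "https://shop.example.com/checkout/thanks",
        )
    ]
}
```

The payload would then be sent as an authenticated POST to the events endpoint for the pixel; that step is omitted here because it depends on the access token and API version in use.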

The third pillar is independent measurement, and this is where the operational gap is largest. With Advantage+, audience breakdowns disappear or become limited, and the ability to triangulate with external tools is reduced. To validate results without relying exclusively on Meta's reporting, there are three approaches of increasing complexity. The most accessible is the holdout test: deactivate Advantage+ in a geographic or temporal segment and compare results controlling for seasonality, with at least two weeks of data and $5K per segment as a working floor for reaching statistical significance (what matters is the number of conversions each segment accumulates, not the spend itself). The second is the geo-lift test: compare similar regions with and without Advantage+ active, measuring the increment in verified conversions. The third, for teams with data science capabilities or access to specialized consulting, is to incorporate Meta's results into a Marketing Mix Model (MMM) that estimates the real incremental contribution of each platform. Zenda's note on Marketing Mix Modeling offers a starting point for evaluating whether this approach is viable for each team's profile.
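For the holdout approach, the comparison comes down to a lift estimate and a significance check. A minimal sketch using a pooled two-proportion z-test, assuming conversions are counted from the advertiser's own records and that users in each segment can be treated as independent; the function name and the sample counts are hypothetical.

```python
from math import erf, sqrt

def lift_test(conv_treat, n_treat, conv_ctrl, n_ctrl):
    """Relative lift and two-sided p-value from a pooled two-proportion z-test."""
    rate_t = conv_treat / n_treat
    rate_c = conv_ctrl / n_ctrl
    pooled = (conv_treat + conv_ctrl) / (n_treat + n_ctrl)
    se = sqrt(pooled * (1 - pooled) * (1 / n_treat + 1 / n_ctrl))
    z = (rate_t - rate_c) / se
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return {
        "lift": (rate_t - rate_c) / rate_c,
        "z": z,
        "p_value": 2 * (1 - normal_cdf),
    }

# Hypothetical segments: Advantage+ active vs. deactivated.
result = lift_test(conv_treat=260, n_treat=10_000, conv_ctrl=200, n_ctrl=10_000)
print(f"lift={result['lift']:.0%}, z={result['z']:.2f}, p={result['p_value']:.4f}")
```

This user-level test is too optimistic for geo-lift designs, where the unit of analysis is the region rather than the user; those call for region-level comparisons or a purpose-built method.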

Incentives: aligned or divergent?

The central tension is not technical but about incentives. Meta operates a marketplace where it is simultaneously the infrastructure operator, an economic participant (through its own inventory of placements), and the sole provider of performance data. This triple position existed before Andromeda, but the new system deepens it: by expanding the candidate pool and moving selection logic toward opaque models, the advertiser's dependence on Meta's word intensifies.

The official argument is that incentives are aligned: more relevant ads generate more interaction, which benefits users, advertisers, and Meta. It is logically coherent but incomplete. "Better results" for Meta means maximizing total system revenue, not necessarily the ROI of each individual advertiser. In a market with growing demand for inventory, the system can generate more aggregate revenue even if the individual return for some advertisers deteriorates. This is not Meta conspiring against its clients: it is that the system's objective function and the advertiser's are not identical, and Andromeda makes it harder to detect when they diverge.

Governance: the dimension media teams do not see

For enterprise profiles with regulatory obligations, the opacity has a dimension that transcends performance. If Andromeda decides which audiences to show an ad to based on signals the advertiser cannot audit, the brand safety question ceases to be purely reputational. In markets with regulations like LGPD in Brazil or the Federal Data Protection Law in Mexico, an advertiser that cannot explain how audiences were selected faces a real, though indirect, regulatory risk. Meta argues that algorithmic targeting complies with regulations because the platform processes data internally. But this defense depends on regulators accepting that total delegation of targeting to an opaque third party does not create co-responsibility. It is unresolved legal territory that compliance teams should be monitoring.

Calibrate the dependency, do not "negotiate" with Meta

The advertiser's real options are not "negotiating with Meta" in the traditional sense (the platform imposes conditions and the advertiser decides whether to stay or leave). The operational question is calibrating the degree of dependency. That can mean maintaining 20% to 30% of the budget in manual campaigns as a control and benchmark, implementing independent measurement with the approaches described above, and diversifying part of the budget toward other channels.

On diversification, an important disclaimer: the alternatives are not necessarily more transparent. Google Performance Max operates with a comparable black box, and TikTok Ads has its own attribution challenges that in some cases are worse. The decision to diversify should not be based on fleeing opacity (which is ubiquitous in the current paid media ecosystem) but on reducing concentration risk. If more than 50% of the paid budget depends on a single platform, any unilateral change on that platform can affect the business disproportionately.
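The two thresholds discussed in this section (a 20% to 30% manual control share and a 50% single-platform ceiling) can be checked with a few lines against a budget sheet. The platform names and amounts below are hypothetical, as are the function names.

```python
def concentration_report(budget_by_platform, threshold=0.50):
    """Share of paid budget per platform and whether the largest exceeds the threshold."""
    total = sum(budget_by_platform.values())
    shares = {name: amount / total for name, amount in budget_by_platform.items()}
    top = max(shares, key=shares.get)
    return {"shares": shares, "top_platform": top, "concentrated": shares[top] > threshold}

def control_share_ok(manual_budget, platform_budget, low=0.20, high=0.30):
    """True when manual campaigns hold between 20% and 30% of the platform budget."""
    return low <= manual_budget / platform_budget <= high

# Hypothetical monthly budgets in USD.
report = concentration_report({"meta": 30_000, "google": 15_000, "tiktok": 5_000})
print(report["top_platform"], report["concentrated"])  # meta True
print(control_share_ok(manual_budget=7_500, platform_budget=30_000))  # True
```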

Who this analysis is for

This analysis makes sense for paid media teams that depend significantly on Meta and are evaluating (or have already made) the migration to Advantage+. It is most relevant for those managing budgets starting at $5K/month in LATAM who need to understand not only how to operate in the new paradigm but how to measure whether the results Meta reports are real.

For small teams (post-seed startups, 2 to 3 people, budgets under $15K/month), the main value lies in the minimum creative testing framework and the accessible alternatives for configuring conversion signals. Do not expect a detailed playbook by vertical: that exceeds the scope of this note and depends on each business's specific context.

For Heads of Paid Media and Performance Leads with teams of 4+ people and budgets of $50K+/month, the value lies in the independent measurement approaches and in the articulation of the incentive conflict, which can serve as a basis for internal conversations about concentration risk.

For Marketing Directors and CMOs with board reporting obligations, the governance analysis and diversification discussion offer language and arguments for contextualizing why the "good results" reported by Meta deserve additional scrutiny. A complete channel diversification framework, however, is a topic for another note.

If Meta is not a significant channel in the current media mix, this note has little operational value.

What we still do not know

The exact objective function of Andromeda in production is not known. Meta's paper describes the architecture but does not detail the loss function or how advertiser objectives are balanced against platform objectives. There is no independent audit of Meta's delivery system comparable to what researchers have done with Google Ads, where biases in Smart Bidding have been documented.

It is also unclear how Andromeda interacts with the pricing system. Meta has migrated from a pure second-price auction model to more complex variants, and there is no public documentation on how the expansion of the candidate pool affects dynamic pricing in practice.

The question of whether Meta implicitly penalizes advertisers who do not migrate to Advantage+ is one of the most relevant and one with the least verifiable data. Anecdotal reports suggest a pattern, but without a controlled study it is impossible to distinguish between deliberate penalization and natural redistribution of impressions toward automated formats.

There are no public data on minimum budgets for Advantage+ to effectively complete its learning phase. The thresholds circulating in the community ($100/day as minimum, $250/day as ideal) have no official backing from Meta.

Advantage+ performance data is predominantly self-reported by Meta or anecdotal from individual advertisers. There is no independent meta-analysis comparing performance while controlling for vertical, budget, and region. Until that exists, evaluating Andromeda necessarily depends on partial data, community reports, and Meta's own publications.

Content assisted by AI and critiqued by three people who cannot agree on anything. This is the result.